- [X] I have marked all applicable categories:

+ [X] exception-raising fix

+ [ ] algorithm implementation fix

+ [ ] documentation modification

+ [ ] new feature

- [X] I have reformatted the code using `make format` (**required**)

- [X] I have checked the code using `make commit-checks` (**required**)

- [ ] If applicable, I have mentioned the relevant/related issue(s)

- [ ] If applicable, I have listed every item in this Pull Request

below

The cause was the use of a lambda function in the state of a generated

object.
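The description does not say how the lambda triggered the exception. One common failure mode, assumed here, is that the object's state must be pickled (for example when it is saved or sent to a subprocess), and the standard `pickle` module cannot serialize lambdas. The sketch below uses plain dicts as hypothetical stand-ins for the object state and shows a picklable replacement built from `functools.partial`:

```python
import operator
import pickle
from functools import partial


def is_picklable(obj: object) -> bool:
    """Return True if the standard pickle module can serialize obj."""
    try:
        pickle.dumps(obj)
    except (pickle.PicklingError, AttributeError, TypeError):
        return False
    return True


# Hypothetical object state holding a lambda: pickle cannot look it up by name.
state_with_lambda = {"scale": lambda x: 2 * x}

# Same behaviour expressed as a partial over a named builtin function.
state_with_partial = {"scale": partial(operator.mul, 2)}

print(is_picklable(state_with_lambda))
print(is_picklable(state_with_partial))
```

Replacing the lambda with a named function, a `partial`, or a small callable class is the usual remedy in that situation.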

This PR closes #938. It introduces all the fundamental concepts and

abstractions, and it already covers the majority of the algorithms. It

is not a complete and finalised product, however, and we recommend that

the high-level API remain in the alpha stage for some time, as already

suggested in the issue.

The changes in this PR are described on a [wiki

page](https://github.com/aai-institute/tianshou/wiki/High-Level-API), a

copy of which is provided below. (The original page is perhaps more

readable, because it does not render line breaks verbatim.)

# Introducing the Tianshou High-Level API

The new high-level library was created based on object-oriented design

principles with two primary design goals:

* **ease of use** for the end user (without sacrificing generality)

This is achieved through:

* a single, well-defined point of interaction (`ExperimentBuilder`)

which uses declarative semantics, allowing the user to focus on

what to do rather than how to do it.

* easily injectable parametrisation.

For complex parametrisation involving objects, the respective

library classes are easily discoverable, keeping the need to

browse reference documentation - or, even worse, inspect code or class

hierarchies - to an absolute minimum.

* reduced points of failure.

Because the high-level API is at a higher level of abstraction, where

more knowledge is available, we can centrally define reasonable

defaults and apply consistency checks in order to ensure that

illegal configurations result in meaningful errors (and are completely

avoided as long as the user does not modify default behaviour).

For example, we can consider interactions between the nature of the

action space and the neural networks being used.

* **maintainability** for developers

This is achieved through:

* a modular design with strong separation of concerns

* a high level of factorisation, which largely avoids duplication,

partly through the use of mixins and multiple inheritance.

This invariably makes the code slightly more complex, yet it greatly

reduces the lines of code to be written/updated, so it is a reasonable

compromise in this case.

## Changeset

The entire high-level library is in its own subpackage

`tianshou.highlevel`

and **almost no changes were made to the original library** in order to

support the new APIs.

For the most part, only typing-related changes were made, which have

aligned type annotations with existing example applications or have made

explicit interfaces that were previously implicit.

Furthermore, some helper modules were added to the `tianshou.util`

package

(all of which were copied from the [sensAI

library](https://github.com/jambit/sensAI)).

Many example applications were added, based on the existing MuJoCo and

Atari

examples (see below).

## User-Facing Interface

### User Experience Example

To illustrate the UX, consider this video recording (IntelliJ IDEA):

Observe how conveniently relevant classes can be discovered via the

IDE's

auto-completion function.

Discoverability is markedly enhanced by using a prefix-based naming

convention,

where classes that can be used as parameters use the base class name as

a prefix,

allowing all potentially relevant subclasses to be straightforwardly

auto-completed.

### Declarative Semantics

A key design principle for the user-facing interface was to achieve

*declarative semantics*, where the user

is no longer concerned with generating a lengthy procedure that

sequentially

constructs components that build upon each other.

Instead, the user focuses purely on

*declaring* the properties of the learning task they would like to run.

* This essentially reduces boiler-plate code to zero, as every part of

the

code is defining essential, experiment-specific configuration.

* This makes it possible to centrally handle interdependent

configuration

and detect/avoid misspecification.

In order to enable the configuration of interdependent objects without

requiring the user to instantiate the respective objects sequentially,

we

heavily employ the *factory pattern*.
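To make the role of factories concrete, here is a minimal, self-contained sketch. All class names below are hypothetical and are not the library's API; the point is only that the user declares an actor factory before any environment exists, and the actor is created later, once the environment's properties are known.

```python
from dataclasses import dataclass


@dataclass
class Env:
    """Hypothetical stand-in for an environment."""
    obs_dim: int
    action_dim: int
    discrete: bool


@dataclass
class Actor:
    """Hypothetical stand-in for an actor network."""
    input_dim: int
    hidden_size: int
    output_dim: int
    kind: str


@dataclass
class EnvFactorySketch:
    obs_dim: int
    action_dim: int
    discrete: bool

    def create_env(self) -> Env:
        return Env(self.obs_dim, self.action_dim, self.discrete)


@dataclass
class ActorFactorySketch:
    hidden_size: int = 64

    def create_actor(self, env: Env) -> Actor:
        # The actor depends on the environment, which did not exist yet
        # when the user declared this factory.
        kind = "categorical" if env.discrete else "gaussian"
        return Actor(env.obs_dim, self.hidden_size, env.action_dim, kind)


def create_components(env_factory: EnvFactorySketch, actor_factory: ActorFactorySketch) -> Actor:
    """Central place where interdependent objects are created in order."""
    env = env_factory.create_env()
    return actor_factory.create_actor(env)


actor = create_components(EnvFactorySketch(4, 2, discrete=True), ActorFactorySketch())
print(actor)
```

Because creation happens in one central function, consistency checks (such as matching the actor type to the action space) have a natural home.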

### Experiment Builders

The end user's primary entry point is an `ExperimentBuilder`, which is

specialised for each algorithm.

As the name suggests, it uses the builder pattern in order to create

an `Experiment` object, which is then used to run the learning task.

* At builder construction, the user is required to provide only

essential

configuration, particularly the environment factory.

* The bulk of the algorithm-specific parameters can be provided

via an algorithm-specific parameter object.

For instance, `PPOExperimentBuilder` has the method `with_ppo_params`,

which expects an object of type `PPOParams`.

* Parametrisation that requires the provision of more complex interfaces

(e.g. where multiple specification variants exist) is handled via

dedicated builder methods.

For example, for the specification of the critic component in an

actor-critic algorithm, the following group of functions is provided:

* `with_critic_factory` (where the user can provide any (user-defined)

factory for the critic component)

* `with_critic_factory_default` (with which the user specifies that

the default, `Net`-based critic architecture shall be used and has the

option to parametrise it)

* `with_critic_factory_use_actor` (with which the user indicates that

the

critic component shall reuse the preprocessing network from the actor

component)
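The following toy builder illustrates how such a group of methods can coexist. It is a sketch under simplifying assumptions: only the three method names are taken from the text above, while the classes, the string-based "critic", and the defaults are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class ExperimentSketch:
    critic_description: str

    def run(self) -> str:
        return f"running with {self.critic_description}"


class ActorCriticBuilderSketch:
    """Hypothetical builder; a string stands in for the real critic factory."""

    def __init__(self) -> None:
        self._critic_description = "default critic, hidden_sizes=(64, 64)"

    def with_critic_factory(self, factory: Callable[[], str]) -> "ActorCriticBuilderSketch":
        # Any user-defined factory is accepted.
        self._critic_description = factory()
        return self

    def with_critic_factory_default(
        self, hidden_sizes: Sequence[int] = (64, 64)
    ) -> "ActorCriticBuilderSketch":
        # The default architecture, optionally parametrised.
        self._critic_description = f"default critic, hidden_sizes={tuple(hidden_sizes)}"
        return self

    def with_critic_factory_use_actor(self) -> "ActorCriticBuilderSketch":
        # The critic reuses the actor's preprocessing network.
        self._critic_description = "critic reusing the actor's preprocessing network"
        return self

    def build(self) -> ExperimentSketch:
        return ExperimentSketch(self._critic_description)


experiment = ActorCriticBuilderSketch().with_critic_factory_default((128, 128)).build()
print(experiment.run())
```

Each method returns the builder itself, which is what allows the chained call style used in the examples.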

#### Examples

##### Minimal Example

In the simplest of cases, where the user wants to use the default

parametrisation for everything, a user could run a PPO learning task

as follows,

```python

experiment = PPOExperimentBuilder(MyEnvFactory()).build()

experiment.run()

```

where `MyEnvFactory` is a factory for the agent's environment.

The default behaviour will adapt depending on whether the factory

creates environments with discrete or continuous action spaces.

##### Fully Parametrised MuJoCo Example

Importantly, the user still has the option to configure all the details.

Consider this example, which is from the high-level version of the

`mujoco_ppo` example:

```python

log_name = os.path.join(task, "ppo", str(experiment_config.seed), datetime_tag())

sampling_config = SamplingConfig(
    num_epochs=epoch,
    step_per_epoch=step_per_epoch,
    batch_size=batch_size,
    num_train_envs=training_num,
    num_test_envs=test_num,
    buffer_size=buffer_size,
    step_per_collect=step_per_collect,
    repeat_per_collect=repeat_per_collect,
)

env_factory = MujocoEnvFactory(task, experiment_config.seed, obs_norm=True)

experiment = (
    PPOExperimentBuilder(env_factory, experiment_config, sampling_config)
    .with_ppo_params(
        PPOParams(
            discount_factor=gamma,
            gae_lambda=gae_lambda,
            action_bound_method=bound_action_method,
            reward_normalization=rew_norm,
            ent_coef=ent_coef,
            vf_coef=vf_coef,
            max_grad_norm=max_grad_norm,
            value_clip=value_clip,
            advantage_normalization=norm_adv,
            eps_clip=eps_clip,
            dual_clip=dual_clip,
            recompute_advantage=recompute_adv,
            lr=lr,
            lr_scheduler_factory=LRSchedulerFactoryLinear(sampling_config)
            if lr_decay
            else None,
            dist_fn=DistributionFunctionFactoryIndependentGaussians(),
        ),
    )
    .with_actor_factory_default(hidden_sizes, torch.nn.Tanh, continuous_unbounded=True)
    .with_critic_factory_default(hidden_sizes, torch.nn.Tanh)
    .build()
)

experiment.run(log_name)

```

This is functionally equivalent to the procedural, low-level example.

Compare the scripts here:

* [original low-level

example](https://github.com/aai-institute/tianshou/blob/feat/high-level-api/examples/mujoco/mujoco_ppo.py)

* [new high-level

example](https://github.com/aai-institute/tianshou/blob/feat/high-level-api/examples/mujoco/mujoco_ppo_hl.py)

In general, find example applications of the high-level API in the

`examples/`

folder in scripts using the `_hl.py` suffix:

* [MuJoCo

examples](https://github.com/aai-institute/tianshou/tree/feat/high-level-api/examples/mujoco)

* [Atari

examples](https://github.com/aai-institute/tianshou/tree/feat/high-level-api/examples/atari)

### Experiments

The `Experiment` representation contains

* the agent factory,

* the environment factory,

* further definitions pertaining to storage & logging.

An experiment may be run several times, assigning a name (and

corresponding

storage location) to each run.

#### Persistence and Logging

Experiments can be serialized and later be reloaded.

```python

experiment = Experiment.from_directory("log/my_experiment")

```

Because the experiment representation is composed purely of

configuration

and factories, which themselves are composed purely of configuration and

factories, persisted objects are compact and do not contain state.

Every experiment run produces the following artifacts:

* the serialized experiment

* the serialized best policy found during training

* a log file

* (optionally) user-defined data, as the persistence

handlers are modular

Running a reloaded experiment can optionally resume training of the

serialized

policy.

All relevant objects have meaningful string representations that can

appear

in logs, which is conveniently achieved through the use of

`ToStringMixin` (from sensAI).

Its use furthermore prevents string representations of recurring objects

from being printed more than once.

For example, consider this string representation, which was generated

for

the fully parametrised PPO experiment from the example above:

```

Experiment[
    config=ExperimentConfig(
        seed=42,
        device='cuda',
        policy_restore_directory=None,
        train=True,
        watch=True,
        watch_render=0.0,
        persistence_base_dir='log',
        persistence_enabled=True),
    sampling_config=SamplingConfig[
        num_epochs=100,
        step_per_epoch=30000,
        batch_size=64,
        num_train_envs=64,
        num_test_envs=10,
        buffer_size=4096,
        step_per_collect=2048,
        repeat_per_collect=10,
        update_per_step=1.0,
        start_timesteps=0,
        start_timesteps_random=False,
        replay_buffer_ignore_obs_next=False,
        replay_buffer_save_only_last_obs=False,
        replay_buffer_stack_num=1],
    env_factory=MujocoEnvFactory[
        task=Ant-v4,
        seed=42,
        obs_norm=True],
    agent_factory=PPOAgentFactory[
        sampling_config=SamplingConfig[<<],
        optim_factory=OptimizerFactoryAdam[
            weight_decay=0,
            eps=1e-08,
            betas=(0.9, 0.999)],
        policy_wrapper_factory=None,
        trainer_callbacks=TrainerCallbacks(
            epoch_callback_train=None,
            epoch_callback_test=None,
            stop_callback=None),
        params=PPOParams[
            gae_lambda=0.95,
            max_batchsize=256,
            lr=0.0003,
            lr_scheduler_factory=LRSchedulerFactoryLinear[sampling_config=SamplingConfig[<<]],
            action_scaling=default,
            action_bound_method=clip,
            discount_factor=0.99,
            reward_normalization=True,
            deterministic_eval=False,
            dist_fn=DistributionFunctionFactoryIndependentGaussians[],
            vf_coef=0.25,
            ent_coef=0.0,
            max_grad_norm=0.5,
            eps_clip=0.2,
            dual_clip=None,
            value_clip=False,
            advantage_normalization=False,
            recompute_advantage=True],
        actor_factory=ActorFactoryTransientStorageDecorator[
            actor_factory=ActorFactoryDefault[
                continuous_actor_type=ContinuousActorType.GAUSSIAN,
                continuous_unbounded=True,
                continuous_conditioned_sigma=False,
                hidden_sizes=[64, 64],
                hidden_activation=<class 'torch.nn.modules.activation.Tanh'>,
                discrete_softmax=True]],
        critic_factory=CriticFactoryDefault[
            hidden_sizes=[64, 64],
            hidden_activation=<class 'torch.nn.modules.activation.Tanh'>],
        critic_use_action=False],
    logger_factory=LoggerFactoryDefault[
        logger_type=tensorboard,
        wandb_project=None],
    env_config=None]

```
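The `<<` markers in the output above stand for objects that were already printed earlier in the same representation. The sketch below shows one simple way to obtain that behaviour. It is a hypothetical, much reduced stand-in and not sensAI's `ToStringMixin` implementation; the class names are illustrative only.

```python
class ToStringSketch:
    """Simplified to-string mixin that prints each object at most once."""

    def _render(self, seen: set) -> str:
        name = type(self).__name__
        if id(self) in seen:
            # Already rendered elsewhere in this representation.
            return f"{name}[<<]"
        seen.add(id(self))
        parts = []
        for key, value in vars(self).items():
            if isinstance(value, ToStringSketch):
                parts.append(f"{key}={value._render(seen)}")
            else:
                parts.append(f"{key}={value!r}")
        return f"{name}[{', '.join(parts)}]"

    def __str__(self) -> str:
        return self._render(set())


class SamplingConfigSketch(ToStringSketch):
    def __init__(self, num_epochs: int) -> None:
        self.num_epochs = num_epochs


class AgentFactorySketch(ToStringSketch):
    def __init__(self, sampling_config: SamplingConfigSketch) -> None:
        self.sampling_config = sampling_config


class ExperimentReprSketch(ToStringSketch):
    def __init__(self, sampling_config: SamplingConfigSketch, agent_factory: AgentFactorySketch) -> None:
        self.sampling_config = sampling_config
        self.agent_factory = agent_factory


sampling = SamplingConfigSketch(num_epochs=100)
text = str(ExperimentReprSketch(sampling, AgentFactorySketch(sampling)))
print(text)
```

The shared `SamplingConfigSketch` instance is printed in full the first time it is encountered and abbreviated afterwards.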

## Library Developer Perspective

The presentation thus far has focussed on the user's perspective.

From the perspective of a Tianshou developer, it is important that the

high-level API be clearly structured and maintainable.

Here are the most relevant representations:

* **Policy parameters** are represented as dataclasses (base class

`Params`).

The goal is for the parameters to be ultimately passed to the

corresponding

policy class (e.g. `PPOParams` contains parameters for `PPOPolicy`).

* **Parameter transformation**:

In part, the parameter dataclass attributes already correspond directly

to

policy class parameters.

However, because the high-level interface must, in many cases, abstract

away

from the low-level interface,

we establish the notion of a `ParamTransformer`, which transforms

one or more parameters into the form that is required by the policy

class:

The idea is that the dictionary representation of the dataclass is

successively transformed via `ParamTransformer`s such that the resulting

dictionary can ultimately be used as keyword arguments for the policy.

To achieve maintainability, the declaration of parameter transformations

is colocated with the parameters they affect.

Tests ensure that naming issues are detected.

* **Composition and inheritance**:

We use inheritance and mixins to reduce duplication.

* **Factories** are an essential principle of the library.

Because the creation of objects may depend on objects that are not

yet created, a declarative approach necessitates that we transition from

the objects themselves to factories.

* The `EnvFactory` was already mentioned above, as it is a user-facing

abstraction.

Its purpose is to create the (vectorized) `Environments` that will be

used in the experiments.

* An `AgentFactory` is the central component that creates the policy,

the trainer as well as the necessary collectors.

To support a new type of policy, a subclass that handles the policy

creation is required.

In turn, the main task when implementing a new algorithm-specific

`ExperimentBuilder` is the creation of the corresponding `AgentFactory`.

* Several types of factories serve to parametrize policies and training

processes, e.g.

* `OptimizerFactory` for the creation of torch optimizers

* `ActorFactory` for the creation of actor models

* `CriticFactory` for the creation of critic models

* `IntermediateModuleFactory` for the creation of models that produce

intermediate/latent representations

* `EnvParamFactory` for the creation of parameters based on properties

of the environment

* `NoiseFactory` for the creation of `BaseNoise` instances

* `DistributionFunctionFactory` for the creation of functions that

create torch distributions from tensors

* `LRSchedulerFactory` for learning rate schedulers

* `PolicyWrapperFactory` for policy wrappers that extend the

functionality of the regular policy (e.g. intrinsic curiosity)

* `AutoAlphaFactory` for automatically tuned regularization

coefficients (as supported by SAC or REDQ)

* A `LoggerFactory` handles the creation of the experiment logger,

but the default implementation already handles the cases that were

used in the examples.

* The `ExperimentBuilder` implementations make use of mixins to add

common

functionality. As mentioned above, the main task in an

algorithm-specific

specialization is to create the `AgentFactory`.
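The parameter transformation idea described above can be sketched in a few lines. Everything in this example is hypothetical (the transformer classes, the `gamma` keyword, and the decision to drop `lr`); it only illustrates how a dataclass's dictionary representation can be transformed step by step into keyword arguments for a policy constructor.

```python
from dataclasses import asdict, dataclass
from typing import Any, Dict, List


class ParamTransformerSketch:
    """Transforms the parameter dictionary in place."""

    def transform(self, params: Dict[str, Any]) -> None:
        raise NotImplementedError


class RenameParam(ParamTransformerSketch):
    def __init__(self, old: str, new: str) -> None:
        self.old, self.new = old, new

    def transform(self, params: Dict[str, Any]) -> None:
        params[self.new] = params.pop(self.old)


class DropParam(ParamTransformerSketch):
    def __init__(self, key: str) -> None:
        self.key = key

    def transform(self, params: Dict[str, Any]) -> None:
        del params[self.key]


@dataclass
class ParamsSketch:
    discount_factor: float = 0.99
    lr: float = 3e-4

    def transformers(self) -> List[ParamTransformerSketch]:
        # Declared next to the parameters they affect.
        return [RenameParam("discount_factor", "gamma"), DropParam("lr")]

    def create_kwargs(self) -> Dict[str, Any]:
        params = asdict(self)
        for transformer in self.transformers():
            transformer.transform(params)
        return params


class PolicySketch:
    def __init__(self, *, gamma: float) -> None:
        self.gamma = gamma


kwargs = ParamsSketch(discount_factor=0.9).create_kwargs()
policy = PolicySketch(**kwargs)
print(kwargs)
```

A misspelled key in a transformer raises a `KeyError` as soon as the kwargs are created, which is the kind of naming issue a test can catch early.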

- [X] I have marked all applicable categories:

+ [ ] exception-raising fix

+ [ ] algorithm implementation fix

+ [X] documentation modification

+ [ ] new feature

- [X] I have reformatted the code using `make format` (**required**)

- [X] I have checked the code using `make commit-checks` (**required**)

- [X] If applicable, I have mentioned the relevant/related issue(s)

+ resolves issue #973

- [ ] If applicable, I have listed every item in this Pull Request

below

Closes #947

This removes all kwargs from all policy constructors. While doing that,

I also improved several names and added a whole lot of TODOs.

## Functional changes:

1. Added possibility to pass None as `critic2` and `critic2_optim`. In

fact, the default behavior then should cover the absolute majority of

cases

2. Added a function called `clone_optimizer` as a temporary measure to

support passing `critic2_optim=None`

## Breaking changes:

1. `action_space` is no longer optional. In fact, it already was

non-optional, as there was a ValueError in `BasePolicy.__init__`. So now

several examples were fixed to reflect that

2. `reward_normalization` removed from DDPG and children. It was never

allowed to pass it as `True` there, an error would have been raised in

`compute_n_step_reward`. Now I removed it from the interface

3. renamed `critic1` and similar to `critic`, in order to have uniform

interfaces. Note that the `critic` in DDPG was optional for the sole

reason that child classes used `critic1`. I removed this optionality

(DDPG can't do anything with `critic=None`)

4. Several renamings of fields (mostly private to public, so backwards

compatible)

## Additional changes:

1. Removed type and default declaration from docstring. This kind of

duplication is really not necessary

2. Policy constructors are now only called using named arguments, not a

fragile mixture of positional and named as before

3. Minor beautifications in typing and code

4. Generally shortened docstrings and made them uniform across all

policies (hopefully)

## Comment:

With these changes, several problems in tianshou's inheritance hierarchy

become more apparent. I tried highlighting them for future work.

---------

Co-authored-by: Dominik Jain <d.jain@appliedai.de>

Close #941

rtfd build link:

https://readthedocs.org/projects/tianshou/builds/22019877/

Also -- fix two small issues reported by users, see #928 and #930

Note: I created the branch in thu-ml:tianshou instead of

Trinkle23897:tianshou to quickly check the rtfd build. It's not a good

process since every commit would trigger the CI pipelines twice :(

Closes #914

Additional changes:

- Deprecate Python versions below 3.11

- Remove 3rd party and throughput tests. This simplifies install and

test pipeline

- Remove gym compatibility and shimmy

- Format with 3.11 conventions. In particular, add `zip(...,

strict=True/False)` where possible

Since the additional tests and gym were complicating the CI pipeline

(flaky and dist-dependent), it didn't make sense to work on fixing the

current tests in this PR to then just delete them in the next one. So

this PR changes the build and removes these tests at the same time.

Preparation for #914 and #920

Changes formatting to ruff and black. Remove python 3.8

## Additional Changes

- Removed flake8 dependencies

- Adjusted pre-commit. Now CI and Make use pre-commit, reducing the

duplication of linting calls

- Removed check-docstyle option (ruff is doing that)

- Merged format and lint. In CI the format-lint step fails if any

changes are done, so it fulfills the lint functionality.

---------

Co-authored-by: Jiayi Weng <jiayi@openai.com>

# Goals of the PR

The PR introduces **no changes to functionality**, apart from improved

input validation here and there. The main goals are to reduce some

complexity of the code, to improve types and IDE completions, and to

extend documentation and block comments where appropriate. Because of

the change to the trainer interfaces, many files are affected (more

details below), but still the overall changes are "small" in a certain

sense.

## Major Change 1 - BatchProtocol

**TL;DR:** One can now annotate which fields the batch is expected to

have on input params and which fields a returned batch has. Should be

useful for reading the code, getting meaningful IDE support, and

catching bugs with mypy. This annotation strategy will continue to work

if Batch is replaced by TensorDict or by something else.

**In more detail:** Batch itself has no fields and using it for

annotations is of limited informational power. Batches with fields are

not separate classes but instead instances of Batch directly, so there

is no type that could be used for annotation. Fortunately, Python's

`Protocol` comes to the rescue. With these changes we can now do

things like

```python

from typing import Any, Dict, Optional, Sequence, Union

import numpy as np
import torch

# Batch, BatchProtocol and BasePolicy are provided by tianshou.


class ActionBatchProtocol(BatchProtocol):
    logits: Sequence[Union[tuple, torch.Tensor]]
    dist: torch.distributions.Distribution
    act: torch.Tensor
    state: Optional[torch.Tensor]


class RolloutBatchProtocol(BatchProtocol):
    obs: torch.Tensor
    obs_next: torch.Tensor
    info: Dict[str, Any]
    rew: torch.Tensor
    terminated: torch.Tensor
    truncated: torch.Tensor


class PGPolicy(BasePolicy):
    ...

    def forward(
        self,
        batch: RolloutBatchProtocol,
        state: Optional[Union[dict, Batch, np.ndarray]] = None,
        **kwargs: Any,
    ) -> ActionBatchProtocol:
        ...

```

The IDE and mypy are now very helpful in finding errors and in

auto-completion, whereas before the tools couldn't assist in that at

all.
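The same idea can be tried without Tianshou or torch. In the sketch below, `types.SimpleNamespace` plays the role of a container whose fields are only known at runtime, and a hypothetical `RewardBatchProtocol` documents which fields a function expects. `typing.cast` has no runtime effect; it only tells the type checker which protocol the object is meant to satisfy.

```python
from types import SimpleNamespace
from typing import List, Protocol, cast


class RewardBatchProtocol(Protocol):
    """Hypothetical protocol: the fields this function relies on."""

    obs: List[float]
    rew: List[float]


def total_reward(batch: RewardBatchProtocol) -> float:
    # IDEs can now auto-complete `batch.rew`, and mypy flags typos such as `batch.rwe`.
    return sum(batch.rew)


# A dynamic container standing in for a batch with runtime-defined fields.
raw = SimpleNamespace(obs=[0.1, 0.2], rew=[1.0, 2.5])
batch = cast(RewardBatchProtocol, raw)
print(total_reward(batch))
```

Because the annotation describes fields rather than a concrete class, it keeps working if the underlying container type is swapped out.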

## Major Change 2 - remove duplication in trainer package

**TL;DR:** There was a lot of duplication between `BaseTrainer` and its

subclasses. Even worse, it was almost-duplication. There was also

interface fragmentation through things like `onpolicy_trainer`. Now this

duplication is gone and all downstream code was adjusted.

**In more detail:** Since this change affects a lot of code, I would

like to explain why I thought it to be necessary.

1. The subclasses of `BaseTrainer` just duplicated docstrings and

constructors. What's worse, they changed the order of args there, even

turning some kwargs of BaseTrainer into args. They also had the arg

`learning_type` which was passed as kwarg to the base class and was

unused there. This made things difficult to maintain, and in fact some

errors were already present in the duplicated docstrings.

2. The "functions" a la `onpolicy_trainer`, which just called the

`OnpolicyTrainer.run`, not only introduced interface fragmentation but

also completely obfuscated the docstring and interfaces. They themselves

had no docstring and the interface was just `*args, **kwargs`, which

makes it impossible to understand what they do and which things can be

passed without reading their implementation, then reading the docstring

of the associated class, etc. Needless to say, mypy and IDEs provide no

support with such functions. Nevertheless, they were used everywhere in

the code-base. I didn't find the sacrifices in clarity and complexity

justified just for the sake of not having to write `.run()` after

instantiating a trainer.

3. The trainers are all very similar to each other. Since I needed a new

trainer for my application, I wanted to understand their

structure. The similarity, however, was hard to discover since they were

all in separate modules and there was so much duplication. I kept

staring at the constructors for a while until I figured out that

essentially no changes to the superclass were introduced. Now they are

all in the same module and the similarities/differences between them are

much easier to grasp (in my opinion).

4. Because of (1), I had to manually change and check a lot of code,

which was very tedious and boring. This kind of work won't be necessary

in the future, since now IDEs can be used for changing signatures,

renaming args and kwargs, changing class names and so on.

I have some more reasons, but maybe the above ones are convincing

enough.
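Point (2) is easy to reproduce in isolation. The sketch below uses hypothetical, heavily simplified stand-ins (`OnpolicyTrainerSketch`, `onpolicy_trainer_sketch`) to show what tooling can see through a `*args, **kwargs` wrapper compared with the class it wraps.

```python
import inspect


class OnpolicyTrainerSketch:
    """Hypothetical, heavily simplified trainer."""

    def __init__(self, policy: str, max_epoch: int = 10) -> None:
        self.policy = policy
        self.max_epoch = max_epoch

    def run(self) -> dict:
        return {"policy": self.policy, "epochs": self.max_epoch}


def onpolicy_trainer_sketch(*args, **kwargs) -> dict:
    # Wrapper in the old style: the real parameters are invisible to tools.
    return OnpolicyTrainerSketch(*args, **kwargs).run()


wrapper_params = list(inspect.signature(onpolicy_trainer_sketch).parameters)
class_params = list(inspect.signature(OnpolicyTrainerSketch).parameters)
print(wrapper_params)
print(class_params)
```

Both call styles return the same result, but only the class exposes parameter names, defaults, and types to IDEs and mypy.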

## Minor changes: improved input validation and types

I added input validation for things like `state` and `action_scaling`

(which only makes sense for continuous envs). After adding this, some

tests failed to pass this validation. There I added

`action_scaling=isinstance(env.action_space, Box)`, after which tests

were green. I don't know why the tests were green before, since action

scaling doesn't make sense for discrete actions. I guess some aspect was

not tested and didn't crash.

I also added Literal in some places, in particular for

`action_bound_method`. Now it is no longer allowed to pass an empty

string, instead one should pass `None`. Also here there is input

validation with clear error messages.
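A minimal sketch of this kind of validation is shown below. The function name, the boolean flag for the action space, and the allowed values `"clip"` and `"tanh"` are assumptions made for illustration; the actual checks live in the policy constructors.

```python
from typing import Literal, Optional, get_args

# Assumed set of allowed values, for illustration only.
ActionBoundMethod = Literal["clip", "tanh"]


def validate_policy_args(
    action_space_is_continuous: bool,
    action_scaling: bool,
    action_bound_method: Optional[ActionBoundMethod],
) -> None:
    """Raise ValueError with a clear message for inconsistent settings."""
    allowed = get_args(ActionBoundMethod)
    if action_bound_method is not None and action_bound_method not in allowed:
        raise ValueError(
            f"action_bound_method must be one of {allowed} or None, "
            f"got {action_bound_method!r}"
        )
    if action_scaling and not action_space_is_continuous:
        raise ValueError("action_scaling only makes sense for continuous action spaces")


validate_policy_args(True, action_scaling=True, action_bound_method="clip")  # passes

try:
    validate_policy_args(True, action_scaling=True, action_bound_method="")  # type: ignore[arg-type]
except ValueError as error:
    print(error)
```

The `Literal` annotation lets mypy reject an empty string statically, while the runtime check covers callers that do not run a type checker.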

@Trinkle23897 The functional tests are green. I didn't want to fix the

formatting, since it will change in the next PR that will solve #914

anyway. I also found a whole bunch of code in `docs/_static`, which I

just deleted (shouldn't it be copied from the sources during docs build

instead of committed?). I also haven't adjusted the documentation yet,

which atm still mentions the trainers of the type

`onpolicy_trainer(...)` instead of `OnpolicyTrainer(...).run()`

## Breaking Changes

The adjustments to the trainer package introduce breaking changes as

duplicated interfaces are deleted. However, it should be very easy for

users to adjust to them

---------

Co-authored-by: Michael Panchenko <m.panchenko@appliedai.de>

Changes:

- Disclaimer in README

- Replaced all occurrences of Gym with Gymnasium

- Removed code that is now dead since we no longer need to support the

old step API

- Updated type hints to only allow new step API

- Increased required version of envpool to support Gymnasium

- Increased required version of PettingZoo to support Gymnasium

- Updated `PettingZooEnv` to only use the new step API, removed hack to

also support old API

- I had to add some `# type: ignore` comments, due to new type hinting

in Gymnasium. I'm not that familiar with type hinting but I believe that

the issue is on the Gymnasium side and we are looking into it.

- Had to update `MyTestEnv` to support `options` kwarg

- Skip NNI tests because they still use OpenAI Gym

- Also allow `PettingZooEnv` in vector environment

- Updated doc page about ReplayBuffer to also talk about terminated and

truncated flags.

Still need to do:

- Update the Jupyter notebooks in docs

- Check the entire code base for more dead code (from compatibility

stuff)

- Check the reset functions of all environments/wrappers in code base to

make sure they use the `options` kwarg

- Someone might want to check test_env_finite.py

- Is it okay to allow `PettingZooEnv` in vector environments? Might need

to update docs?

## implementation

I implemented HER solely as a replay buffer. It is done by temporarily

directly re-writing transitions storage (`self._meta`) during the

`sample_indices()` call. The original transitions are cached and will be

restored at the beginning of the next sampling or when another method is

called. This makes sure that, for example, n-step return calculation

can be done without altering the policy.

There is also a problem with the original indices sampling. The sampled

indices are not guaranteed to be from different episodes. So I decided

to perform re-writing based on the episode. This guarantees that the

sampled transitions from the same episode will have the same re-written

goal. This also makes the re-writing ratio calculation differ slightly

from the paper, but it won't be too different if there are many episodes

in the buffer.

In the current commit, the HER replay buffer only supports the 'future' strategy

and online sampling. This is the best of HER in terms of performance and

memory efficiency.
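For readers unfamiliar with the 'future' strategy, the following sketch shows the core relabelling step on a toy one-dimensional episode. It is an illustration under simplifying assumptions and not the buffer's implementation: the `GoalTransition` class and the sparse reward are hypothetical, goals are plain floats, and a relabelled copy is returned instead of rewriting storage in place or grouping by episode.

```python
import random
from dataclasses import dataclass, replace
from typing import List


@dataclass(frozen=True)
class GoalTransition:
    achieved_goal: float
    desired_goal: float
    reward: float


def sparse_reward(achieved: float, desired: float, tol: float = 1e-6) -> float:
    """0 when the goal is reached, -1 otherwise (assumed reward convention)."""
    return 0.0 if abs(achieved - desired) <= tol else -1.0


def relabel_future(episode: List[GoalTransition], index: int, rng: random.Random) -> GoalTransition:
    """Replace the desired goal with a goal achieved at or after `index`."""
    future_index = rng.randint(index, len(episode) - 1)
    new_goal = episode[future_index].achieved_goal
    original = episode[index]
    return replace(
        original,
        desired_goal=new_goal,
        reward=sparse_reward(original.achieved_goal, new_goal),
    )


# Toy episode: the agent moves from 0 to 4 but was asked to reach 10.
episode = [GoalTransition(float(t), desired_goal=10.0, reward=-1.0) for t in range(5)]
relabelled = relabel_future(episode, index=4, rng=random.Random(0))
print(relabelled)
```

For the final transition the only available future goal is its own achieved goal, so the relabelled transition counts as a success.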

I also added a few more convenient replay buffers

(`HERVectorReplayBuffer`, `HERReplayBufferManager`), test env

(`MyGoalEnv`), gym wrapper (`TruncatedAsTerminated`), unit tests, and a

simple example (examples/offline/fetch_her_ddpg.py).

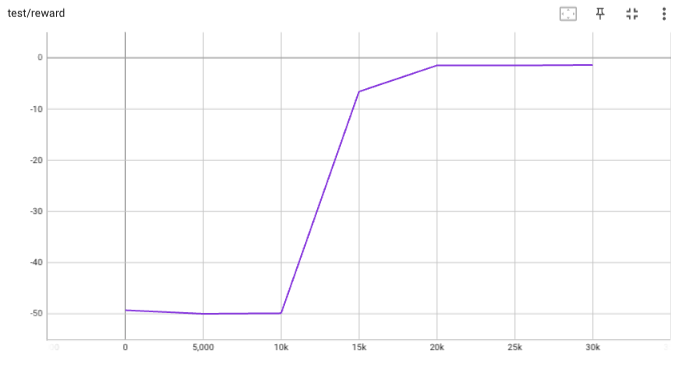

## verification

I have added unit tests for almost everything I have implemented.

HER replay buffer was also tested using DDPG on [`FetchReach-v3`

env](https://github.com/Farama-Foundation/Gymnasium-Robotics). I used

default DDPG parameters from mujoco example and didn't tune anything

further to get this good result! (train script:

examples/offline/fetch_her_ddpg.py).

- This PR adds the checks that are defined in the Makefile as pre-commit

hooks.

- Hopefully, the checks are equivalent to those from the Makefile, but I

can't guarantee it.

- CI remains as it is.

- As I pointed out on discord, I experienced some conflicts between

flake8 and yapf, so it might be better to transition to some other

combination (e.g. black).

The new proposed feature is to have trainers as generators.

The usage pattern is:

```python

trainer = OnpolicyTrainer(...)

for epoch, epoch_stat, info in trainer:
    print(f"Epoch: {epoch}")
    print(epoch_stat)
    print(info)

    # Arbitrary user code can run between epochs.
    do_something_with_policy()
    query_something_about_policy()
    make_a_plot_with(epoch_stat)
    display(info)

```

- `epoch` (int): the epoch number

- `epoch_stat` (dict): a large collection of metrics of the current epoch, including stat

- `info` (dict): the usual dict returned by the non-generator version of the trainer

You can even iterate on several different trainers at the same time:

```python

trainer1 = OnpolicyTrainer(...)
trainer2 = OnpolicyTrainer(...)

# Each result is an (epoch, epoch_stat, info) tuple; add more trainers as needed.
for result1, result2 in zip(trainer1, trainer2):
    compare_results(result1, result2)

```
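How a trainer can support this iteration style is sketched below. `GeneratorTrainerSketch` is a hypothetical toy class, not the real trainer: it fabricates its metrics and only demonstrates the iterator protocol (`__iter__` / `__next__`) and how a conventional `run()` method can be expressed on top of it.

```python
from typing import Dict, Tuple


class GeneratorTrainerSketch:
    """Toy trainer that yields (epoch, epoch_stat, info) once per epoch."""

    def __init__(self, max_epoch: int) -> None:
        self.max_epoch = max_epoch
        self.epoch = 0

    def __iter__(self) -> "GeneratorTrainerSketch":
        self.epoch = 0  # restart training when iteration begins
        return self

    def __next__(self) -> Tuple[int, Dict[str, float], Dict[str, int]]:
        if self.epoch >= self.max_epoch:
            raise StopIteration
        self.epoch += 1
        epoch_stat = {"loss": 1.0 / self.epoch}  # placeholder metric
        info = {"best_epoch": self.epoch}  # placeholder summary
        return self.epoch, epoch_stat, info

    def run(self) -> Dict[str, int]:
        """Non-generator usage: exhaust the iterator and return the last info."""
        info: Dict[str, int] = {}
        for _, _, info in self:
            pass
        return info


for epoch, epoch_stat, info in GeneratorTrainerSketch(max_epoch=3):
    print(epoch, epoch_stat, info)
```

With this structure, the generator and non-generator usages share a single training loop.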

Co-authored-by: Jiayi Weng <trinkle23897@gmail.com>

* Use `global_step` as the x-axis for wandb

* Use TensorBoard `SummaryWriter` as core with `wandb.init(..., sync_tensorboard=True)`

* Update all atari examples with wandb

Co-authored-by: Jiayi Weng <trinkle23897@gmail.com>

- change the internal API name of worker: send_action -> send, get_result -> recv (align with envpool)

- add a timing test for venvs.reset() to verify concurrent execution

- change venvs.reset() logic

Co-authored-by: Jiayi Weng <trinkle23897@gmail.com>

This PR implements BCQPolicy, which can be used to train an offline agent in environments with continuous action spaces. An experimental result on 'halfcheetah-expert-v1', a d4rl environment (for Offline Reinforcement Learning), is provided.

Example usage is in the examples/offline/offline_bcq.py.