## implementation

I implemented HER solely as a replay buffer. It works by temporarily
rewriting the transition storage (`self._meta`) in place during the
`sample_indices()` call. The original transitions are cached and
restored at the beginning of the next sampling or when any other method
is called. This ensures that, for example, n-step return calculation
can be done without altering the policy.
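
To illustrate the cache-and-restore idea, here is a minimal sketch on a toy buffer. `ToyRewritingBuffer`, its attributes, and the `new_goal` argument are hypothetical names chosen for the example; this is not the code in this PR, and the real buffer rewrites full transitions rather than a flat array of goals.

```python
import numpy as np


class ToyRewritingBuffer:
    """Toy buffer that rewrites stored goals in place while sampling.

    Hypothetical illustration only; this is not Tianshou's HER buffer.
    """

    def __init__(self, goals):
        self.goals = np.array(goals, dtype=float)  # stand-in for the storage
        self._cached_idx = None    # indices rewritten by the last sampling
        self._cached_goals = None  # their original values

    def _restore_cache(self):
        """Undo the previous rewrite, if there was one."""
        if self._cached_idx is not None:
            self.goals[self._cached_idx] = self._cached_goals
            self._cached_idx = self._cached_goals = None

    def sample_indices(self, indices, new_goal):
        """Rewrite the goals at `indices`, remembering the originals."""
        self._restore_cache()
        indices = np.asarray(indices)
        self._cached_idx = indices
        self._cached_goals = self.goals[indices].copy()
        self.goals[indices] = new_goal
        return indices

    def original_goals(self):
        """Any other method restores the storage before reading it."""
        self._restore_cache()
        return self.goals.copy()


buf = ToyRewritingBuffer([1.0, 2.0, 3.0, 4.0])
buf.sample_indices([0, 2], new_goal=9.0)
rewritten = buf.goals.copy()     # goals 0 and 2 are temporarily 9.0
restored = buf.original_goals()  # the original values are back
```

The point of restoring lazily is that code reading the storage right after sampling sees the rewritten goals, while every later operation sees the untouched data.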

There is also a problem with the original index sampling: the sampled
indices are not guaranteed to come from different episodes. I therefore
decided to perform the rewriting per episode, which guarantees that
sampled transitions from the same episode share the same rewritten
goal. As a result, the rewriting ratio differs slightly from the one in
the paper, but the difference should be small when there are many
episodes in the buffer.

In the current commit, the HER replay buffer only supports the 'future'
strategy and online sampling. This is the best HER variant in terms of
performance and memory efficiency.
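
As a rough sketch of per-episode relabeling with the 'future' strategy, the snippet below gives every sampled step of one episode the same goal, taken from a goal achieved later in that episode. The function names, the sparse reward, and the choice to draw the future step at or after the latest sampled step are assumptions made for the example; the PR may pick the future step differently.

```python
import numpy as np


def relabel_episode(achieved, desired, sampled_steps, rng):
    """Give every sampled step of one episode the same 'future' goal.

    achieved: (T, goal_dim) goals reached after each step.
    desired: (T, goal_dim) goals the agent was asked to reach.
    Assumption: the future step is drawn uniformly from the steps at or
    after the latest sampled step, so it is in the future for all of them.
    """
    start = int(np.max(sampled_steps))
    future_t = int(rng.integers(start, len(achieved)))  # upper bound excluded
    new_desired = desired.copy()
    new_desired[sampled_steps] = achieved[future_t]
    return new_desired, future_t


def sparse_reward(achieved, desired, tol=1e-6):
    """Hypothetical reward: 0 when the goal is reached, -1 otherwise."""
    dist = np.linalg.norm(achieved - desired, axis=-1)
    return np.where(dist <= tol, 0.0, -1.0)


achieved = np.arange(5, dtype=float)[:, None]  # episode of 5 steps, 1-D goals
desired = np.full((5, 1), 10.0)                # original goal, never reached
rng = np.random.default_rng(0)
sampled = np.array([1, 3])
new_desired, future_t = relabel_episode(achieved, desired, sampled, rng)
rewards = sparse_reward(achieved, new_desired)  # recomputed after relabeling
```

Rewards have to be recomputed after the goals change, which is why the relabeled goals are passed back through the reward function.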

I also added a few convenience replay buffers
(`HERVectorReplayBuffer`, `HERReplayBufferManager`), a test env
(`MyGoalEnv`), a gym wrapper (`TruncatedAsTerminated`), unit tests, and
a simple example (`examples/offline/fetch_her_ddpg.py`).

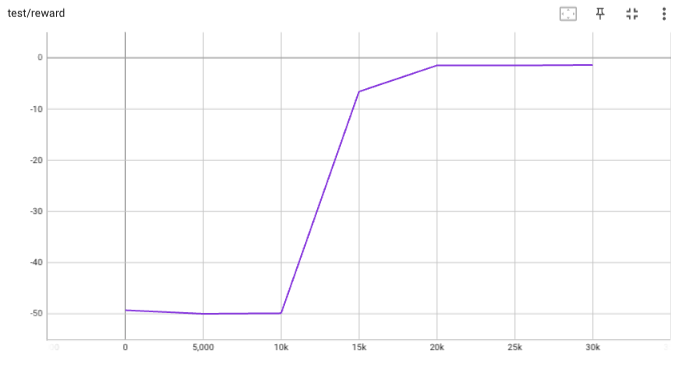

## verification

I have added unit tests for almost everything I have implemented.

The HER replay buffer was also tested using DDPG on the [`FetchReach-v3`
env](https://github.com/Farama-Foundation/Gymnasium-Robotics). I used
the default DDPG parameters from the mujoco example and didn't tune
anything further to get this good result (train script:
`examples/offline/fetch_her_ddpg.py`).

- This PR adds the checks that are defined in the Makefile as pre-commit
  hooks.
- Hopefully, the checks are equivalent to those from the Makefile, but I
  can't guarantee it.
- CI remains as it is.
- As I pointed out on discord, I experienced some conflicts between
  flake8 and yapf, so it might be better to transition to some other
  combination (e.g. black).

The newly proposed feature is to have trainers as generators.

The usage pattern is:

```python
trainer = OnPolicyTrainer(...)
for epoch, epoch_stat, info in trainer:
    print(f"Epoch: {epoch}")
    print(epoch_stat)
    print(info)
    do_something_with_policy()
    query_something_about_policy()
    make_a_plot_with(epoch_stat)
    display(info)
```

- `epoch` (int): the epoch number
- `epoch_stat` (dict): a large collection of metrics of the current epoch, including stat
- `info` (dict): the usual dict out of the non-generator version of the trainer

You can even iterate on several different trainers at the same time:

```python
trainer1 = OnPolicyTrainer(...)
trainer2 = OnPolicyTrainer(...)
# Add more trainers to zip() and more names to the loop target as needed.
for result1, result2 in zip(trainer1, trainer2):
    compare_results(result1, result2)
```
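
For readers wondering how a trainer can support both patterns above, here is a minimal sketch of the iterator protocol on a toy class. `ToyTrainer`, its `lr` parameter, and the fake loss update are hypothetical stand-ins; this is not how `OnPolicyTrainer` is implemented, only an illustration of yielding `(epoch, epoch_stat, info)` once per epoch.

```python
class ToyTrainer:
    """Hypothetical iterator-style trainer; not Tianshou's OnPolicyTrainer."""

    def __init__(self, max_epoch, lr):
        self.max_epoch = max_epoch
        self.lr = lr
        self.epoch = 0
        self.loss = 1.0
        self.best_loss = float("inf")

    def __iter__(self):
        return self

    def __next__(self):
        if self.epoch >= self.max_epoch:
            raise StopIteration
        self.epoch += 1
        self.loss *= 1.0 - self.lr  # stand-in for one epoch of training
        self.best_loss = min(self.best_loss, self.loss)
        epoch_stat = {"epoch": self.epoch, "loss": self.loss}
        info = {"best_loss": self.best_loss}
        return self.epoch, epoch_stat, info

    def run(self):
        """Non-generator entry point: exhaust the iterator, return last info."""
        info = {}
        for _, _, info in self:
            pass
        return info


# Pattern 1: iterate over one trainer and inspect each epoch.
history = [(epoch, stat["loss"]) for epoch, stat, _ in ToyTrainer(3, lr=0.5)]

# Pattern 2: step two trainers in lockstep and compare them.
pairs = list(zip(ToyTrainer(2, lr=0.5), ToyTrainer(2, lr=0.1)))

# The blocking call is just the iterator run to completion.
final_info = ToyTrainer(3, lr=0.5).run()
```

Keeping the per-epoch work in `__next__` means the blocking and the step-by-step interfaces share one code path.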

Co-authored-by: Jiayi Weng <trinkle23897@gmail.com>

* Use `global_step` as the x-axis for wandb

* Use Tensorboard `SummaryWriter` as core with `wandb.init(..., sync_tensorboard=True)`

* Update all atari examples with wandb

Co-authored-by: Jiayi Weng <trinkle23897@gmail.com>

- change the internal API names of the worker: `send_action` -> `send`, `get_result` -> `recv` (to align with envpool)
- add a timing test for `venvs.reset()` to make sure it executes concurrently
- change the `venvs.reset()` logic
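
A timing test of this kind can be sketched as follows. `SlowEnv`, `reset_all`, and `timed_reset` are hypothetical names, and threads stand in for the real workers; the actual test in this PR may be structured differently. The idea is that if four resets of 0.2 s each run concurrently, the total should stay well below the sequential 0.8 s.

```python
import time
from concurrent.futures import ThreadPoolExecutor


class SlowEnv:
    """Hypothetical environment whose reset takes `delay` seconds."""

    def __init__(self, delay):
        self.delay = delay

    def reset(self):
        time.sleep(self.delay)
        return 0


def reset_all(envs):
    """Reset all environments concurrently, one thread per environment."""
    with ThreadPoolExecutor(max_workers=len(envs)) as pool:
        return list(pool.map(lambda env: env.reset(), envs))


def timed_reset(envs):
    """Return the observations and the wall-clock time the reset took."""
    start = time.perf_counter()
    obs = reset_all(envs)
    return obs, time.perf_counter() - start


envs = [SlowEnv(0.2) for _ in range(4)]
obs, elapsed = timed_reset(envs)
```

A test would then assert that `elapsed` is clearly under the sequential total, with a generous margin to avoid flakiness on slow machines.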

Co-authored-by: Jiayi Weng <trinkle23897@gmail.com>

This PR implements `BCQPolicy`, which can be used to train an offline agent in environments with continuous action spaces. An experimental result on 'halfcheetah-expert-v1', a d4rl environment (for offline reinforcement learning), is provided.

Example usage is in `examples/offline/offline_bcq.py`.

- Batch: do not raise an error when it finds a list of `np.array` with different `shape[0]`.
- Venv's obs: add a try...except block for `np.stack(obs_list)`
- remove `venv.__del__` since it is buggy
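
The try...except around `np.stack(obs_list)` can be sketched like this. The helper name `stack_obs` and the fallback to a 1-D object array are assumptions for the example, not necessarily what the PR does.

```python
import numpy as np


def stack_obs(obs_list):
    """Stack observations, falling back to an object array on shape mismatch."""
    try:
        return np.stack(obs_list)
    except ValueError:
        # Shapes differ, so keep each observation as a separate element.
        out = np.empty(len(obs_list), dtype=object)
        for i, obs in enumerate(obs_list):
            out[i] = obs
        return out


same = stack_obs([np.zeros(3), np.ones(3)])    # regular (2, 3) float array
ragged = stack_obs([np.zeros(2), np.ones(5)])  # object array of length 2
```

Filling a preallocated object array element by element avoids the cases where `np.array(obs_list, dtype=object)` tries to broadcast partially matching shapes.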