- [X] I have marked all applicable categories:

+ [ ] exception-raising fix

+ [ ] algorithm implementation fix

+ [ ] documentation modification

+ [X] new feature

- [X] I have reformatted the code using `make format` (**required**)

- [X] I have checked the code using `make commit-checks` (**required**)

- [X] If applicable, I have mentioned the relevant/related issue(s)

- [X] If applicable, I have listed every item in this Pull Request below

Closes #941

rtfd build link:

https://readthedocs.org/projects/tianshou/builds/22019877/

Also -- fix two small issues reported by users, see #928 and #930

Note: I created the branch in thu-ml:tianshou instead of Trinkle23897:tianshou to quickly check the rtfd build. It's not a good process, since every commit triggers the CI pipelines twice :(

Closes #914

Additional changes:

- Deprecate Python versions below 3.11

- Remove 3rd party and throughput tests. This simplifies the install and test pipeline

- Remove gym compatibility and shimmy

- Format with 3.11 conventions. In particular, add `zip(..., strict=True/False)` where possible

Since the additional tests and gym were complicating the CI pipeline

(flaky and dist-dependent), it didn't make sense to work on fixing the

current tests in this PR to then just delete them in the next one. So

this PR changes the build and removes these tests at the same time.

Preparation for #914 and #920

Changes formatting to ruff and black. Removes Python 3.8 support.

## Additional Changes

- Removed flake8 dependencies

- Adjusted pre-commit. Now CI and Make use pre-commit, reducing the

duplication of linting calls

- Removed check-docstyle option (ruff is doing that)

- Merged format and lint. In CI the format-lint step fails if any

changes are done, so it fulfills the lint functionality.

---------

Co-authored-by: Jiayi Weng <jiayi@openai.com>

# Goals of the PR

The PR introduces **no changes to functionality**, apart from improved

input validation here and there. The main goals are to reduce some

complexity of the code, to improve types and IDE completions, and to

extend documentation and block comments where appropriate. Because of

the change to the trainer interfaces, many files are affected (more

details below), but still the overall changes are "small" in a certain

sense.

## Major Change 1 - BatchProtocol

**TL;DR:** One can now annotate which fields a batch is expected to have on input params and which fields a returned batch has. This should be useful for reading the code, getting meaningful IDE support, and catching bugs with mypy. This annotation strategy will continue to work if Batch is replaced by TensorDict or by something else.

**In more detail:** Batch itself has no fields, so using it for annotations conveys little information. Batches with fields are not separate classes but direct instances of Batch, so there is no type that could be used for annotation. Fortunately, Python's `Protocol` comes to the rescue. With these changes we can now do things like

```python
class ActionBatchProtocol(BatchProtocol):
    logits: Sequence[Union[tuple, torch.Tensor]]
    dist: torch.distributions.Distribution
    act: torch.Tensor
    state: Optional[torch.Tensor]


class RolloutBatchProtocol(BatchProtocol):
    obs: torch.Tensor
    obs_next: torch.Tensor
    info: Dict[str, Any]
    rew: torch.Tensor
    terminated: torch.Tensor
    truncated: torch.Tensor


class PGPolicy(BasePolicy):
    ...

    def forward(
        self,
        batch: RolloutBatchProtocol,
        state: Optional[Union[dict, Batch, np.ndarray]] = None,
        **kwargs: Any,
    ) -> ActionBatchProtocol:
        ...
```

The IDE and mypy are now very helpful in finding errors and in

auto-completion, whereas before the tools couldn't assist in that at

all.
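To show the mechanism in isolation, here is a minimal, self-contained sketch using only the standard library. The `Batch` class below is a hypothetical stand-in whose fields are set dynamically, and `RewardBatchProtocol` and `episode_return` are names invented for this example, not part of the PR.

```python
from typing import Any, Protocol, cast


class Batch:
    """Hypothetical stand-in for a batch whose fields are set dynamically."""

    def __init__(self, **fields: Any) -> None:
        for name, value in fields.items():
            setattr(self, name, value)


class RewardBatchProtocol(Protocol):
    """Declares expected fields without introducing a new runtime class."""

    rew: list[float]
    terminated: list[bool]


def episode_return(batch: RewardBatchProtocol) -> float:
    # A type checker can verify the access to `rew` here and would flag
    # a typo such as `batch.reward`.
    return sum(batch.rew)


# At runtime the object is still a plain Batch; the cast only tells the
# type checker which fields this particular instance carries.
batch = cast(
    RewardBatchProtocol,
    Batch(rew=[1.0, 0.5, 2.0], terminated=[False, False, True]),
)
total = episode_return(batch)
```

Since the protocol only describes structure, the same annotations would keep working if the container type behind them changed.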

## Major Change 2 - remove duplication in trainer package

**TL;DR:** There was a lot of duplication between `BaseTrainer` and its

subclasses. Even worse, it was almost-duplication. There was also

interface fragmentation through things like `onpolicy_trainer`. Now this

duplication is gone and all downstream code was adjusted.

**In more detail:** Since this change affects a lot of code, I would

like to explain why I thought it to be necessary.

1. The subclasses of `BaseTrainer` just duplicated docstrings and

constructors. What's worse, they changed the order of args there, even

turning some kwargs of BaseTrainer into args. They also had the arg

`learning_type` which was passed as kwarg to the base class and was

unused there. This made things difficult to maintain, and in fact some

errors were already present in the duplicated docstrings.

2. The "functions" a la `onpolicy_trainer`, which just called the

`OnpolicyTrainer.run`, not only introduced interface fragmentation but

also completely obfuscated the docstring and interfaces. They themselves

had no docstring and the interface was just `*args, **kwargs`, which

makes it impossible to understand what they do and which things can be

passed without reading their implementation, then reading the docstring

of the associated class, etc. Needless to say, mypy and IDEs provide no

support with such functions. Nevertheless, they were used everywhere in

the code-base. I didn't find the sacrifices in clarity and complexity

justified just for the sake of not having to write `.run()` after

instantiating a trainer.

3. The trainers are all very similar to each other. Since I needed a new trainer for my application, I wanted to understand their structure. The similarity, however, was hard to discover since they were all in separate modules and there was so much duplication. I kept staring at the constructors for a while until I figured out that essentially no changes to the superclass were introduced. Now they are all in the same module and the similarities/differences between them are much easier to grasp (in my opinion).

4. Because of (1), I had to manually change and check a lot of code,

which was very tedious and boring. This kind of work won't be necessary

in the future, since now IDEs can be used for changing signatures,

renaming args and kwargs, changing class names and so on.

I have some more reasons, but maybe the above ones are convincing

enough.
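The point about `*args, **kwargs` wrappers hiding the interface can be made concrete with `inspect.signature`. The sketch below uses a hypothetical, heavily simplified trainer (`OnpolicyTrainerSketch`) and wrapper (`onpolicy_trainer_sketch`); neither mirrors the real constructor arguments.

```python
import inspect


class OnpolicyTrainerSketch:
    """Hypothetical, heavily simplified trainer; not the real class."""

    def __init__(self, policy: str, max_epoch: int, step_per_epoch: int = 1000) -> None:
        self.policy = policy
        self.max_epoch = max_epoch
        self.step_per_epoch = step_per_epoch

    def run(self) -> dict[str, int]:
        # Placeholder for the training loop; returns summary statistics.
        return {"epochs": self.max_epoch, "steps": self.max_epoch * self.step_per_epoch}


def onpolicy_trainer_sketch(*args, **kwargs):
    # Old-style convenience wrapper: its signature reveals nothing
    # about the accepted arguments to callers, mypy, or IDEs.
    return OnpolicyTrainerSketch(*args, **kwargs).run()


wrapper_params = list(inspect.signature(onpolicy_trainer_sketch).parameters)
class_params = list(inspect.signature(OnpolicyTrainerSketch).parameters)
result = OnpolicyTrainerSketch(policy="ppo", max_epoch=2).run()
```

Tools see only `args` and `kwargs` on the wrapper, while the class exposes every parameter with its type and default, which is what makes automated refactoring possible.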

## Minor changes: improved input validation and types

I added input validation for things like `state` and `action_scaling`

(which only makes sense for continuous envs). After adding this, some

tests failed to pass this validation. There I added

`action_scaling=isinstance(env.action_space, Box)`, after which tests

were green. I don't know why the tests were green before, since action

scaling doesn't make sense for discrete actions. I guess some aspect was

not tested and didn't crash.

I also added Literal in some places, in particular for

`action_bound_method`. Now it is no longer allowed to pass an empty

string, instead one should pass `None`. Also here there is input

validation with clear error messages.
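A rough sketch of what such validation could look like is given below. `Box` and `Discrete` are hypothetical stand-ins for the corresponding space classes, the helper names are invented, and the allowed values `"clip"` and `"tanh"` are an assumption made for the example.

```python
from typing import Literal, Optional


class Box:
    """Hypothetical stand-in for a continuous action space."""


class Discrete:
    """Hypothetical stand-in for a discrete action space."""


# Assumed set of allowed bound methods, for illustration only.
BoundMethod = Optional[Literal["clip", "tanh"]]


def validate_action_args(
    action_space: object,
    action_scaling: bool,
    action_bound_method: BoundMethod,
) -> None:
    """Raise ValueError with a descriptive message on inconsistent arguments."""
    if action_scaling and not isinstance(action_space, Box):
        raise ValueError(
            "action_scaling=True only makes sense for continuous (Box) action "
            f"spaces, got {type(action_space).__name__}"
        )
    # An empty string is rejected; None is the way to disable bounding.
    if action_bound_method not in (None, "clip", "tanh"):
        raise ValueError(
            f"action_bound_method must be None, 'clip' or 'tanh', got {action_bound_method!r}"
        )


def is_valid(*args) -> bool:
    # Small helper for the demonstration: True if validation passes.
    try:
        validate_action_args(*args)
        return True
    except ValueError:
        return False


space = Discrete()
ok_discrete = is_valid(space, isinstance(space, Box), None)
bad_scaling = is_valid(space, True, None)
bad_bound = is_valid(Box(), True, "")
```

Passing `action_scaling=isinstance(space, Box)` is the same pattern used to fix the failing tests: scaling is switched on only when the space is continuous.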

@Trinkle23897 The functional tests are green. I didn't want to fix the

formatting, since it will change in the next PR that will solve #914

anyway. I also found a whole bunch of code in `docs/_static`, which I

just deleted (shouldn't it be copied from the sources during docs build

instead of committed?). I also haven't adjusted the documentation yet,

which atm still mentions the trainers of the type

`onpolicy_trainer(...)` instead of `OnpolicyTrainer(...).run()`

## Breaking Changes

The adjustments to the trainer package introduce breaking changes as

duplicated interfaces are deleted. However, it should be very easy for

users to adjust to them

---------

Co-authored-by: Michael Panchenko <m.panchenko@appliedai.de>

At the moment, `WandbLogger` always calls `wandb.init` with `monitor_gym=True`. This fails when OpenAI's gym is not installed, which doesn't make sense after the transition to Gymnasium.

I am using Tianshou with non-standard RL environments that adhere to the Gymnasium API, and the current code throws exceptions.

I suggest making it a controllable parameter. I left the default value at `True` (so that behaviour is unchanged for people using gym). It may also make sense to change the default to `False`.
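The change amounts to forwarding a constructor argument instead of hard-coding it. A minimal sketch of that pattern follows. `WandbLoggerSketch` and `fake_init` are hypothetical names, and `fake_init` stands in for `wandb.init` so the example runs without wandb installed.

```python
from typing import Any, Callable


class WandbLoggerSketch:
    """Hypothetical, stripped-down logger; not the real WandbLogger."""

    def __init__(
        self,
        init_fn: Callable[..., Any],
        project: str,
        monitor_gym: bool = True,
    ) -> None:
        # The flag is forwarded instead of being hard-coded to True.
        self.run = init_fn(project=project, monitor_gym=monitor_gym)


def fake_init(**kwargs: Any) -> dict[str, Any]:
    # Stand-in for wandb.init: simply echoes the keyword arguments.
    return kwargs


default_logger = WandbLoggerSketch(fake_init, project="demo")
gymnasium_logger = WandbLoggerSketch(fake_init, project="demo", monitor_gym=False)
```

Keeping the default at `True` preserves existing behaviour, while Gymnasium-only setups can opt out explicitly.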

**The assert was missing a description, I fixed it.**

Please note: there is an error in the documentation, but it does not seem to be related to my changes.

- Fix the current bug discussed in #844 in `test_ppo.py`.

- Add warning for `ActorProb` if both `max_action` and `unbounded=True` are used for model initialization.

- Add warning for `PGPolicy` and `DDPGPolicy` if they detect that an action-bounded actor and an action scaling method are used together.
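As an illustration of the kind of warning described in the list above, here is a small sketch. The helper `resolve_max_action` is hypothetical, and the assumption that a non-default `max_action` is ignored (reset to 1.0) when `unbounded=True` is made for the example only.

```python
import warnings


def resolve_max_action(max_action: float = 1.0, unbounded: bool = False) -> float:
    """Hypothetical helper: return the max_action that will actually be applied."""
    if unbounded and max_action != 1.0:
        # Assumed behaviour: the conflicting value is discarded with a warning.
        warnings.warn(
            "max_action is ignored when unbounded=True",
            UserWarning,
            stacklevel=2,
        )
        return 1.0
    return max_action


# Capture the warning so the effect can be inspected programmatically.
with warnings.catch_warnings(record=True) as caught:
    warnings.simplefilter("always")
    effective = resolve_max_action(max_action=2.0, unbounded=True)
```

A warning rather than an exception keeps existing scripts running while still surfacing the conflicting configuration.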

Specifically, BasePolicy.set_eps seems to be a remnant from using DQN in

other examples.

* Removed unused functions (test_fn and train_fn) from the pettingzoo

example with PistonBall. These functions use set_eps which is not

available for PPO and is not even called once in the file.

- [ ] I have marked all applicable categories:

+ [x] exception-raising fix

+ [ ] algorithm implementation fix

+ [ ] documentation modification

+ [ ] new feature

- [ ] I have reformatted the code using `make format` (**required**)

- [ ] I have checked the code using `make commit-checks` (**required**)

- [ ] If applicable, I have mentioned the relevant/related issue(s)

- [ ] If applicable, I have listed every item in this Pull Request below

I'm developing a new PettingZoo environment. It is a two-player, turn-based board game.

```
obs_space = dict(
    board=gym.spaces.MultiBinary([8, 8]),
    player=gym.spaces.Tuple([gym.spaces.Discrete(8)] * 2),
    other_player=gym.spaces.Tuple([gym.spaces.Discrete(8)] * 2),
)
self._observation_space = gym.spaces.Dict(spaces=obs_space)
self._action_space = gym.spaces.Tuple([gym.spaces.Discrete(8)] * 2)

...

# this cache ensures that same space object is returned for the same agent
# allows action space seeding to work as expected
@functools.lru_cache(maxsize=None)
def observation_space(self, agent):
    # gymnasium spaces are defined and documented here: https://gymnasium.farama.org/api/spaces/
    return self._observation_space

@functools.lru_cache(maxsize=None)
def action_space(self, agent):
    return self._action_space
```

My test is:

```
def test_with_tianshou():
    action = None

    # env = gym.make('qwertyenv/CollectCoins-v0', pieces=['rock', 'rock'])
    env = CollectCoinsEnv(pieces=['rock', 'rock'], with_mask=True)

    def another_action_taken(action_taken):
        nonlocal action
        action = action_taken

    # Wrapping the original environment as to make sure a valid action will be taken.
    env = EnsureValidAction(
        env,
        env.check_action_valid,
        env.provide_alternative_valid_action,
        another_action_taken
    )

    env = PettingZooEnv(env)

    policies = MultiAgentPolicyManager([RandomPolicy(), RandomPolicy()], env)

    env = DummyVectorEnv([lambda: env])

    collector = Collector(policies, env)

    result = collector.collect(n_step=200, render=0.1)
```

I also have a wrapper that may be redundant given Tianshou's action-mask capability, yet it is still part of the code:

```
from typing import TypeVar, Callable

import gymnasium as gym
from pettingzoo.utils.wrappers import BaseWrapper

Action = TypeVar("Action")


class ActionWrapper(BaseWrapper):
    def __init__(self, env: gym.Env):
        super().__init__(env)

    def step(self, action):
        action = self.action(action)
        self.env.step(action)

    def action(self, action):
        pass

    def render(self, *args, **kwargs):
        self.env.render(*args, **kwargs)


class EnsureValidAction(ActionWrapper):
    """
    A gym environment wrapper to help with the case that the agent wants to take invalid actions.
    For example consider a Chess game, where you let the action_space be any piece moving to any square on the board,
    but then when a wrong move is taken, instead of returning a big negative reward, you just take another action,
    this time a valid one. To make sure the learning algorithm is aware of the action taken, a callback should be provided.
    """

    def __init__(self, env: gym.Env,
                 check_action_valid: Callable[[Action], bool],
                 provide_alternative_valid_action: Callable[[Action], Action],
                 alternative_action_cb: Callable[[Action], None]):
        super().__init__(env)
        self.check_action_valid = check_action_valid
        self.provide_alternative_valid_action = provide_alternative_valid_action
        self.alternative_action_cb = alternative_action_cb

    def action(self, action: Action) -> Action:
        if self.check_action_valid(action):
            return action
        alternative_action = self.provide_alternative_valid_action(action)
        self.alternative_action_cb(alternative_action)
        return alternative_action
```

To make the above work, I had to patch PettingZoo a bit (I opened a pull request there) and add a small patch here (this PR).

Maybe I'm doing something wrong, yet I fail to see it.

With both of my fixes, to PZ and to Tianshou, I have two tests: one of the environment by itself, and the other as shown above.

Changes:

- Disclaimer in README

- Replaced all occurrences of Gym with Gymnasium

- Removed code that is now dead since we no longer need to support the

old step API

- Updated type hints to only allow new step API

- Increased required version of envpool to support Gymnasium

- Increased required version of PettingZoo to support Gymnasium

- Updated `PettingZooEnv` to only use the new step API, removed hack to

also support old API

- I had to add some `# type: ignore` comments, due to new type hinting

in Gymnasium. I'm not that familiar with type hinting but I believe that

the issue is on the Gymnasium side and we are looking into it.

- Had to update `MyTestEnv` to support `options` kwarg

- Skip NNI tests because they still use OpenAI Gym

- Also allow `PettingZooEnv` in vector environment

- Updated doc page about ReplayBuffer to also talk about terminated and

truncated flags.
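For readers unfamiliar with the new step API referred to in the list above, the sketch below shows its shape: `reset` accepts `seed` and `options` and returns `(obs, info)`, and `step` returns a five-tuple with separate `terminated` and `truncated` flags. `CountdownEnv` and `rollout` are hypothetical toy code written for this example, not part of Tianshou or Gymnasium.

```python
from typing import Any, Optional


class CountdownEnv:
    """Hypothetical toy env following the five-tuple step API."""

    def __init__(self, goal: int = 3, time_limit: int = 5) -> None:
        self.goal = goal
        self.time_limit = time_limit
        self.position = 0
        self.steps = 0

    def reset(
        self, seed: Optional[int] = None, options: Optional[dict[str, Any]] = None
    ) -> tuple[int, dict[str, Any]]:
        self.position = 0
        self.steps = 0
        return self.position, {}

    def step(self, action: int) -> tuple[int, float, bool, bool, dict[str, Any]]:
        self.position += action
        self.steps += 1
        # terminated: the task itself ended (goal reached).
        terminated = self.position >= self.goal
        # truncated: the episode was cut off by the time limit.
        truncated = not terminated and self.steps >= self.time_limit
        reward = 1.0 if terminated else 0.0
        return self.position, reward, terminated, truncated, {}


def rollout(env: CountdownEnv, actions: list[int]) -> tuple[bool, bool]:
    env.reset()
    terminated = truncated = False
    for action in actions:
        _, _, terminated, truncated, _ = env.step(action)
        # The old single `done` flag corresponds to either flag being set.
        if terminated or truncated:
            break
    return terminated, truncated


reached_goal = rollout(CountdownEnv(), [1, 1, 1])
timed_out = rollout(CountdownEnv(), [0, 0, 0, 0, 0])
```

Keeping the two flags apart matters for value bootstrapping: a truncated episode did not reach a terminal state, so its final transition should not be treated as one.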

Still need to do:

- Update the Jupyter notebooks in docs

- Check the entire code base for more dead code (from compatibility

stuff)

- Check the reset functions of all environments/wrappers in code base to

make sure they use the `options` kwarg

- Someone might want to check test_env_finite.py

- Is it okay to allow `PettingZooEnv` in vector environments? Might need

to update docs?

This makes it possible, for instance, to change the action registered in the buffer when the environment modifies the action.

This is useful in offline learning, for instance, since the true actions are in a dataset and the actions of the agent are ignored.

- [ ] I have marked all applicable categories:

+ [ ] exception-raising fix

+ [ ] algorithm implementation fix

+ [ ] documentation modification

+ [X] new feature

- [X] I have reformatted the code using `make format` (**required**)

- [X] I have checked the code using `make commit-checks` (**required**)

- [ ] If applicable, I have mentioned the relevant/related issue(s)

- [X] If applicable, I have listed every item in this Pull Request below

As mentioned in #770, I have fixed the mismatch of args between the Net and MLP. Also, in order to initialize the norm_layers and activations, norm_args and act_args are added to the miniblock and related classes.

This PR addresses #772 (updates Atari wrappers to work with new Gym API)

and some additional issues:

- Pre-commit was using gitlab for flake8, which as of recently requires

authentication -> Replaced with GitHub

- Yapf was quietly failing in pre-commit. Changed it such that it fixes

formatting in-place

- There is an incompatibility between flake8 and yapf where yapf puts

binary operators after the line break and flake8 wants it before the

break. I added an exception for flake8.

- Also require `packaging` in setup.py

My changes shouldn't change the behaviour of the wrappers for older

versions, but please double check.

Idk whether it's just me, but there are always some incompatibilities between yapf and flake8 that need to be resolved manually. It might make sense to try black instead.

- [x] I have marked all applicable categories:

+ [ ] exception-raising fix

+ [x] algorithm implementation fix

+ [ ] documentation modification

+ [ ] new feature

- [x] I have reformatted the code using `make format` (**required**)

- [x] I have checked the code using `make commit-checks` (**required**)

- [x] If applicable, I have mentioned the relevant/related issue(s)

- [x] If applicable, I have listed every item in this Pull Request below

While trying to debug Atari PPO+LSTM, I found a significant gap between our Atari PPO example and [CleanRL's Atari PPO w/ EnvPool](https://docs.cleanrl.dev/rl-algorithms/ppo/#ppo_atari_envpoolpy). I tried to align our implementation with CleanRL's version, mostly in hyperparameter choices, and got significant gains in Breakout, Qbert, and SpaceInvaders while staying on par in other games. After this fix, I would suggest updating our [Atari Benchmark](https://tianshou.readthedocs.io/en/master/tutorials/benchmark.html) PPO experiments.

A few interesting findings:

- Layer initialization helps stabilize training and enables the use of larger learning rates; without it, larger learning rates trigger NaN gradients very quickly;

- ppo.py#L97-L101: this change helps training stability for reasons I do not understand; it also makes the GPU usage higher.

Shoutout to [CleanRL](https://github.com/vwxyzjn/cleanrl) for a

well-tuned Atari PPO reference implementation!

IMHO, a unit test, as opposed to an integration test, an end-to-end test, or other kinds of tests, usually tests only a single method/function/class/etc. and often relies on many stubs and mocks, so it is far from a typical/real usage scenario. Integration and e2e tests, on the other hand, mock less and are closer to the real case.

Tianshou says:

> ... tests include the full agent training procedure for all of the

implemented algorithms

It seems that this is more than unit testing and falls into the category of integration or even e2e tests.

## implementation

I implemented HER solely as a replay buffer. It works by temporarily re-writing the transition storage (`self._meta`) directly during the `sample_indices()` call. The original transitions are cached and restored at the beginning of the next sampling or when other methods are called. This ensures that, for example, the n-step return calculation can be done without altering the policy.

There is also a problem with the original index sampling: the sampled indices are not guaranteed to be from different episodes. So I decided to perform the re-writing per episode. This guarantees that sampled transitions from the same episode have the same re-written goal. It also makes the re-writing ratio calculation differ slightly from the paper, but the difference will be small if there are many episodes in the buffer.

In the current commit, the HER replay buffer only supports the 'future' strategy and online sampling. This is the best variant of HER in terms of performance and memory efficiency.
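The per-episode 'future' relabeling can be sketched in plain Python as follows. This is a simplified illustration, not the buffer implementation: the function `relabel_episode_future`, the goal representation, and the sparse reward are all hypothetical, and drawing the new goal from a step at or after the latest sampled step is an assumption of this sketch.

```python
import random
from typing import Callable, Sequence

Goal = tuple[int, int]


def relabel_episode_future(
    achieved_goals: Sequence[Goal],
    desired_goals: Sequence[Goal],
    sampled_steps: Sequence[int],
    compute_reward: Callable[[Goal, Goal], float],
    rng: random.Random,
) -> tuple[list[Goal], dict[int, float]]:
    """Relabel the sampled steps of ONE episode with a single future goal."""
    # Pick a step at or after the latest sampled step, so the new goal lies
    # in the "future" of every sampled transition (assumption of this sketch).
    future_step = rng.randint(max(sampled_steps), len(achieved_goals) - 1)
    new_goal = achieved_goals[future_step]

    # Work on a copy: the original goals stay available for later restoration.
    relabeled = list(desired_goals)
    rewards: dict[int, float] = {}
    for step in sampled_steps:
        relabeled[step] = new_goal
        rewards[step] = compute_reward(achieved_goals[step], new_goal)
    return relabeled, rewards


def sparse_reward(achieved: Goal, goal: Goal) -> float:
    # 0 when the goal is reached, -1 otherwise (a common sparse-reward convention).
    return 0.0 if achieved == goal else -1.0


achieved = [(0, 1), (0, 2), (1, 2), (2, 2)]
desired = [(5, 5)] * 4
new_goals, new_rewards = relabel_episode_future(
    achieved, desired, sampled_steps=[0, 3], compute_reward=sparse_reward, rng=random.Random(0)
)
```

All sampled transitions of the episode share one re-written goal, and the untouched `desired` list plays the role of the cached originals.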

I also added a few convenience replay buffers (`HERVectorReplayBuffer`, `HERReplayBufferManager`), a test env (`MyGoalEnv`), a gym wrapper (`TruncatedAsTerminated`), unit tests, and a simple example (examples/offline/fetch_her_ddpg.py).

## verification

I have added unit tests for almost everything I have implemented.

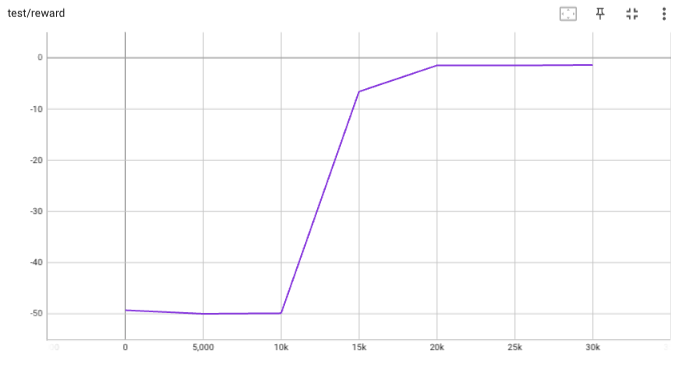

HER replay buffer was also tested using DDPG on [`FetchReach-v3`

env](https://github.com/Farama-Foundation/Gymnasium-Robotics). I used

default DDPG parameters from mujoco example and didn't tune anything

further to get this good result! (train script:

examples/offline/fetch_her_ddpg.py).

- This PR adds the checks that are defined in the Makefile as pre-commit

hooks.

- Hopefully, the checks are equivalent to those from the Makefile, but I

can't guarantee it.

- CI remains as it is.

- As I pointed out on discord, I experienced some conflicts between

flake8 and yapf, so it might be better to transition to some other

combination (e.g. black).

* Changes to support Gym 0.26.0

* Replace map by simpler list comprehension

* Use syntax that is compatible with python 3.7

* Format code

* Fix environment seeding in test environment, fix buffer_profile test

* Remove self.seed() from __init__

* Fix random number generation

* Fix throughput tests

* Fix tests

* Removed done field from Buffer, fixed throughput test, turned off wandb, fixed formatting, fixed type hints, allow preprocessing_fn with truncated and terminated arguments, updated docstrings

* fix lint

* fix

* fix import

* fix

* fix mypy

* pytest --ignore='test/3rd_party'

* Use correct step API in _SetAttrWrapper

* Format

* Fix mypy

* Format

* Fix pydocstyle.

fixes some deprecation warnings due to new changes in gym version 0.23:

- use `env.np_random.integers` instead of `env.np_random.randint`

- support `seed` and `return_info` arguments for reset (addresses https://github.com/thu-ml/tianshou/issues/605)