- [x] I have marked all applicable categories:

+ [ ] exception-raising fix

+ [x] algorithm implementation fix

+ [ ] documentation modification

+ [ ] new feature

- [x] I have reformatted the code using `make format` (**required**)

- [x] I have checked the code using `make commit-checks` (**required**)

- [x] If applicable, I have mentioned the relevant/related issue(s)

- [x] If applicable, I have listed every items in this Pull Request

below

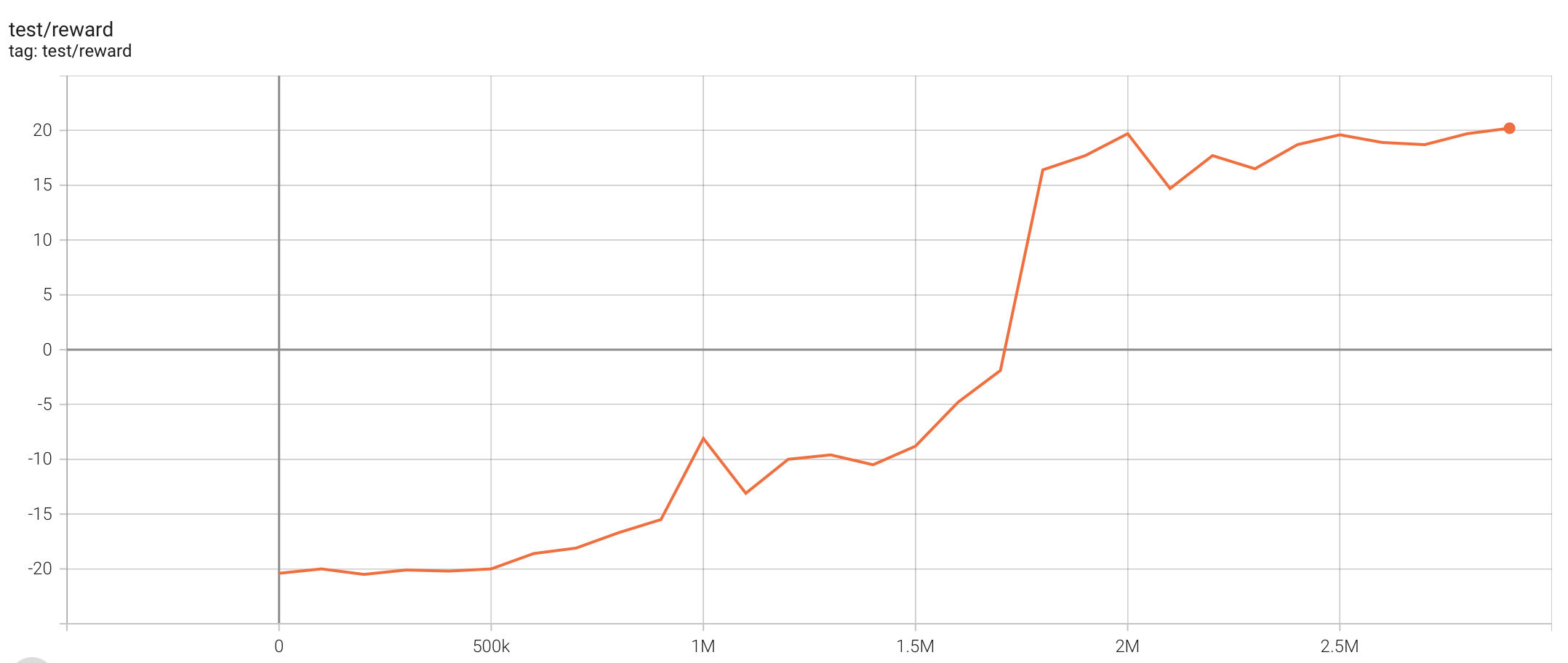

While trying to debug Atari PPO+LSTM, I found significant gap between

our Atari PPO example vs [CleanRL's Atari PPO w/

EnvPool](https://docs.cleanrl.dev/rl-algorithms/ppo/#ppo_atari_envpoolpy).

I tried to align our implementation with CleaRL's version, mostly in

hyper parameter choices, and got significant gain in Breakout, Qbert,

SpaceInvaders while on par in other games. After this fix, I would

suggest updating our [Atari

Benchmark](https://tianshou.readthedocs.io/en/master/tutorials/benchmark.html)

PPO experiments.

A few interesting findings:

- Layer initialization helps stabilize the training and enable the use

of larger learning rates; without it, larger learning rates will trigger

NaN gradient very quickly;

- ppo.py#L97-L101: this change helps training stability for reasons I do

not understand; also it makes the GPU usage higher.

Shoutout to [CleanRL](https://github.com/vwxyzjn/cleanrl) for a

well-tuned Atari PPO reference implementation!

111 KiB

2156x912px

111 KiB

2156x912px